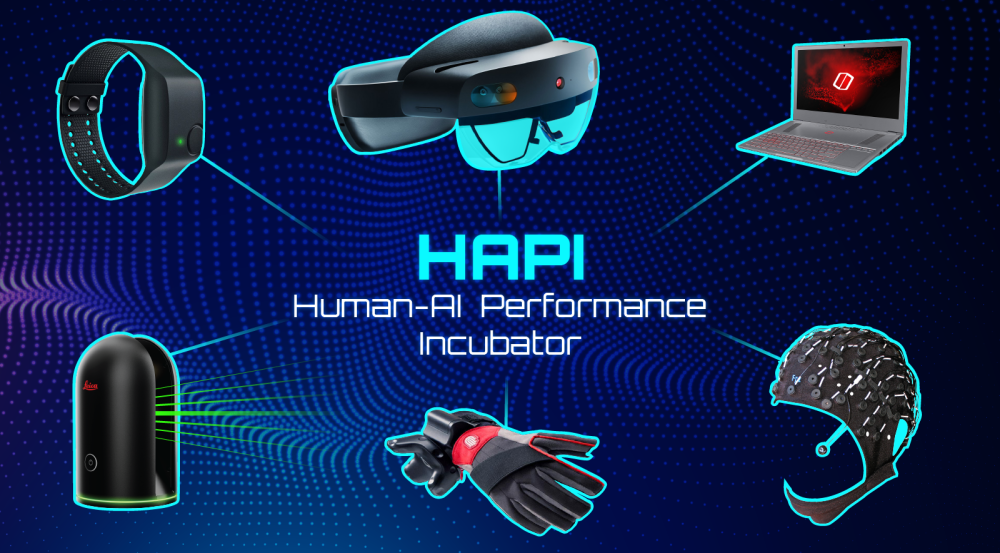

Human-AI Performance Incubator

Human-AI teaming is an emerging area of research that examines how the crucial interaction between humans and artificial intelligence (AI) affects mission readiness. In the AI Software Architectures and Algorithms Group, we believe the focus should be on business or mission success in the presence of technology innovation, rather than on technical performance alone. Our approach is to leverage the strengths and address the weaknesses of the human-AI team.

To that end, we’ve established the Human-AI Performance Incubator (HAPI) Lab to accelerate progress in human-AI teaming. The HAPI Lab is a physical space where collaborators can develop the capabilities needed to improve teaming and increase the effectiveness of AI in everyday operations by drawing on Lincoln Laboratory work, products, and access to industry transition partners. The space offers the equipment needed to measure the performance of human-AI teams and to develop metrics for evaluating their effectiveness. A sampling of our equipment includes augmented and virtual reality–capable glasses, compute infrastructure, wearable input devices, environmental mapping devices, electroencephalography (EEG) for cognitive load measurement, and biometric sensing, all integrated in a temperature-controlled laboratory space with state-of-the-art IT infrastructure.

Our objective is to foster a community where commercial, academic, and government researchers in the human-AI performance space can collaborate to drive innovation.

Our researchers recently instrumented the lab with a new capability: real-time capture of data from highly accurate, hands-free cursor control driven by eye tracking. This capability allows us to collect physiological biometrics as a user interacts with UI displays and AI tools, and it is foundational for capturing quantitative measures of human performance.