Technology Office Challenges

To foster innovation that may lead to new R&D projects at the Laboratory, the Technology Office designs challenges that inspire multidisciplinary teams to invent creative solutions to emerging problems affecting national security.

Begun in 2010, the challenges have sparked new concepts for improving multisensor acquisition of data for situational awareness, building novel hardware, detecting "fake news," developing fabrics as wearable sensors, and sensing objects underwater.

The basic procedure for challenges has remained the same: proposals to answer the challenge are submitted, evaluated, and narrowed down to two or three that receive funding. The finalist teams then present the systems they developed to solve the challenge, and a winner is declared.

Over the years, the process has become a crowdsourced one, enabled by an internal online forum where teams post their proposals for the current challenge and the Laboratory community comments on and rates them.

CHALLENGES

2024

5T Casualty Care Challenge

The 5Ts — triage, track, transmit, transport, and train — encompass key aspects of providing care after a mass casualty event. This Challenge aimed to provide solutions to the most pressing problems in mass casualty care in austere environments. Participants heard perspectives from military and government stakeholders on challenges in this space. Chosen from a broad spectrum of compelling proposals, the winning idea involved the use of whole blood donations in the field and received seed funding to begin technology development.

2023

MIRAGE

The goal of the Mixed Reality for Augmenting Group Experiences (MIRAGE) Challenge was to acquaint Laboratory staff with the latest capabilities of augmented-reality eyewear systems. Teams were tasked with using the HoloLens 2 and a custom Laboratory-built interactive game to compete in complex challenges and puzzles, in which an operator wore the HoloLens headset and communicated with a handler and their support team about the course objectives. Participating in the MIRAGE Challenge allowed staff to explore how mixed and augmented reality can enhance both their performance in accomplishing objectives and their research and development at the Laboratory.

2022

Fool Me Challenge: Part Two

We hosted the second phase of the Fool Me Challenge, a hackathon-style contest to detect data poisoning. The challenge organizers covertly modified, or “poisoned,” a series of overhead imagery datasets to taint machine learning classifiers. The teams were asked to design robust classification algorithms capable of correctly classifying test imagery and detecting which images had been modified. The challenge drew 35 participants across seven teams and was conducted virtually through the Gather.Town web interface. The lessons learned from the Fool Me Challenge will help the Laboratory better understand trends in information manipulation, anticipate the broader implications for national security, and develop mitigation strategies.

2021

Technologies to Enable Future Space

Space assets are becoming increasingly important to national security and commercial applications, from weather monitoring to deep space exploration. We asked the Laboratory community to generate ideas that advance our capabilities to design, build, and field advanced systems that meet the needs of future space missions. After considering 29 compelling ideas, we selected 3D-Printed Shielding for High-Performance Radiation-Hardened Satellites as the challenge winner. Space is a harsh place for electronic systems. This technology uses a custom 3D-printing process to deposit material on a satellite's electronic components to protect them from radiation damage. We are excited about the ongoing development of this proposal and the advancement of the other ideas that increase our ability to field novel space systems.

Read the news story on the winning proposal: “3D-printed shielding can help protect satellites from radiation damage.”

2020

Fool Me Challenge

In 2020 and 2021, we planned a two-part challenge to address the threats to national security posed by fake data and influence operations. The first part asked participants to identify and generate examples of data manipulation that utilize emerging techniques and avoid traditional methods for concealment, deception, or camouflage. Two winners were named: “Foreign Fake Factory: Generation of Fake IO Using Transformer Models” and “Hiding in Plain Sight: Poisoning Attack on Land Use Classification.”

The datasets from the winning land use classification entry were adapted for use in the second part of the challenge, a virtual hackathon in which teams developed methods to defend against poisoning, a form of data manipulation in which an adversary plants false information in machine learning training data. The hackathon winner was team VeritaSerum, who developed an algorithm that could successfully detect manipulated images across multiple poisoned overhead imagery datasets. Their algorithm combined two techniques: outlier detection and ensembles.
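As a rough illustration of how outlier detection and ensembles can work together, the sketch below lets three simple outlier detectors vote on which items look suspicious. It is not team VeritaSerum's algorithm, which is not described here. The per-image anomaly scores, the function names, and the thresholds are all assumptions made up for the example.

```python
from statistics import mean, median, pstdev, quantiles


def zscore_flags(scores, threshold=2.0):
    """Flag scores more than `threshold` standard deviations from the mean."""
    mu, sigma = mean(scores), pstdev(scores)
    if sigma == 0:
        return [False] * len(scores)
    return [abs(s - mu) / sigma > threshold for s in scores]


def mad_flags(scores, threshold=3.5):
    """Flag scores by a robust z-score built on the median absolute deviation."""
    med = median(scores)
    mad = median([abs(s - med) for s in scores])
    if mad == 0:
        return [False] * len(scores)
    # 0.6745 rescales the MAD so it is comparable to a standard deviation.
    return [0.6745 * abs(s - med) / mad > threshold for s in scores]


def iqr_flags(scores, k=1.5):
    """Flag scores outside the Tukey fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(scores, n=4)
    spread = q3 - q1
    return [s < q1 - k * spread or s > q3 + k * spread for s in scores]


def suspicious_indices(scores, min_votes=2):
    """Return indices flagged by at least `min_votes` of the three detectors."""
    votes = zip(zscore_flags(scores), mad_flags(scores), iqr_flags(scores))
    return [i for i, row in enumerate(votes) if sum(row) >= min_votes]


# Made-up per-image anomaly scores; index 6 plays the role of a poisoned image.
scores = [0.51, 0.49, 0.50, 0.52, 0.48, 0.50, 0.95, 0.51]
print(suspicious_indices(scores))  # [6]
```

Requiring agreement between detectors is the ensemble part: an image is reported only when more than one method considers it an outlier, which tends to reduce false alarms from any single detector.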

Although the hackathon declared only one winning team, all of the techniques developed during the challenge are potential assets for the Laboratory’s future research into the threat of influence operations and data manipulation.

2019

Technology for Advanced Fibers, Fabrics, and Extruded Elements (TAFFEE)

The TAFFEE challenge tasked teams with leveraging the capabilities of the Defense Fabric Discovery Center to develop advanced fiber concepts relevant to the Laboratory mission space. The winning technology was the Wearable Triboelectric Generator, which was designed to harvest the static electricity that accumulates on clothing. Fibers embedded in fabrics would collect the electricity that is generated when natural body motion causes two different materials to rub against each other. This energy harvesting at the fiber scale has the long-term potential to enable integration of low-power sensors and actuators in future functional fabrics.

2018

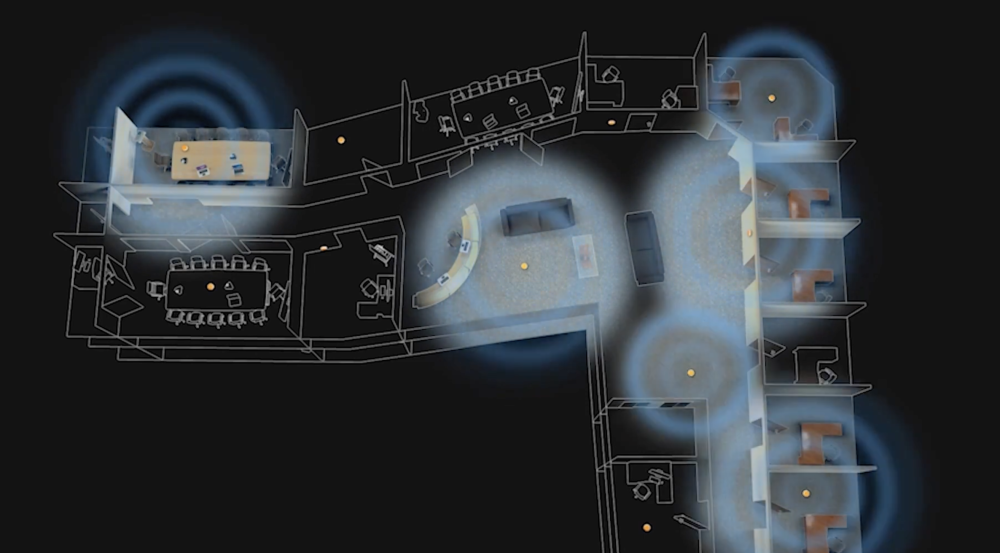

Special Challenge: Disaster Preparedness

We sought concepts that could help first responders in the immediate aftermath of a disaster when information from reliable sources is crucial but local infrastructure, such as power or cellular service, may be unavailable. Tracking the locations of all rescuers and making that data available in real time allows rescue efforts to save lives. The winner was the Low-cost Localization using Distributed Adaptable-Response Transponders (LLDART) project, which proposed a deployable navigation network of small, low-cost radio transponders carried by rescuers to exchange their location information. By fusing this information with that from traditional sensors, such as an inertial measurement unit and GPS when available, personnel in command posts can estimate the locations of rescuers in the field.
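One common way to fuse position estimates from several sources is inverse-variance weighting, sketched below. The LLDART fusion method is not specified here, so this is only an assumed stand-in: it treats each source as an independent estimate with a known variance, and the function name and all numbers are hypothetical.

```python
def fuse_positions(estimates):
    """Fuse ((x, y), variance) estimates by inverse-variance weighting.

    Sources with a smaller variance (more trusted) pull the fused position
    harder. Returns the fused (x, y) and the variance of the fused estimate.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    x = sum(w * pos[0] for w, (pos, _) in zip(weights, estimates)) / total
    y = sum(w * pos[1] for w, (pos, _) in zip(weights, estimates)) / total
    return (x, y), 1.0 / total


# Hypothetical estimates in meters, each paired with a variance in m^2.
transponder = ((10.0, 20.0), 4.0)
inertial = ((12.0, 18.0), 4.0)
gps = ((11.0, 19.0), 1.0)

# GPS unavailable: fuse only the transponder and inertial estimates.
print(fuse_positions([transponder, inertial]))  # ((11.0, 19.0), 2.0)

# GPS available: the fused variance shrinks further.
position, variance = fuse_positions([transponder, inertial, gps])
print(position, round(variance, 3))  # (11.0, 19.0) 0.667
```

Because a missing source is simply left out of the list, the same function covers the case where GPS drops out.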

2017

Breaking News or Broken News? A "Fake Media" Hackathon

Unsubstantiated, sometimes intentionally misleading, media content undermines democracy through the erosion of public trust and misunderstanding of important information. When events, actions, and government data are distorted in false reporting, the ability of the public to discern truth becomes more and more strained. To combat this important problem, the Technology Office challenged the Laboratory community to build automatic detectors of unreliable media in its first-ever hackathon in 2017. The challenge was an opportunity for multidisciplinary teams from across the Laboratory to explore machine learning methods for analyzing multimedia data to identify false versus reliable news.

Read the news story on the hackathon: “Using machine learning to detect fake news.”

2016

CANUSEA

Could staff devise an autonomous, coordinated approach to finding black boxes submerged after an airplane crash over the ocean? This was the question posed by the 2016 challenge, the Coordinated Autonomous Novel Underwater Sensing and Exploitation Ability, or CANUSEA. Two finalist teams conducted demonstrations of their concepts at a local lake, and their innovative concepts may lead to new research for Laboratory staff who are exploring strategies for undersea operations.